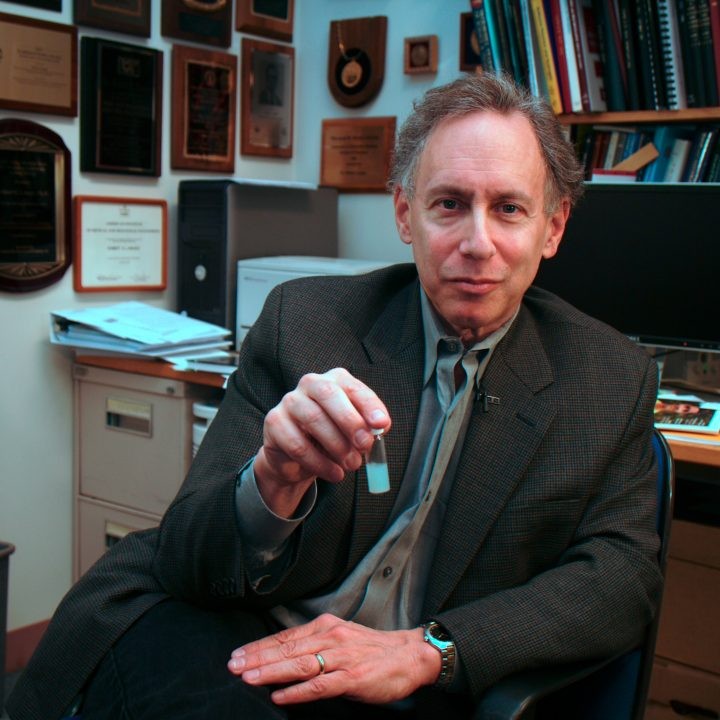

Dr. Robert Langer started his career working for a clinician named Judah Folkman.

“He was trying to figure out a way to stop blood vessels growing into tumors and my work was to isolate substances that might stop the blood vessels growing into cancerous tumors.”

To do that, Langer had to identify such substances and also conduct a bioassay that would test the effects of these substances in the body by putting a material containing the substance into the body right next to a tumor. It had to last for at least a month, if not longer, and not cause any harm to the body.

“The only way I could think to do this was to create a polymer that would slowly release the different molecules I was isolating. And we were fortunate, after quite a long period of work, to develop a whole series of slow release polymers that could release medications to the body over time.”

In fact polymers were used to encase drug molecules even before Langer’s work, but the problem was the size of the new drug molecules: they were simply too big to go through the small holes in polymers and there was nothing one could do about it, Langer was advised.

So instead of changing the laws of nature, Langer turned the question upside down: rather than putting the drug molecules into polymer, he layered polymer around the molecules in a three-dimensional matrix structure that allowed the molecules to pass through slowly. By changing the molecules and the polymer, he was now able to use all kinds of molecules and control their release almost as he wanted.

The properties of polymers can also be modified by external stimuli such as ultrasound, electric pulses or magnetic fields to change the release rate of the drug. This has led to the development of increasingly sophisticated release systems and, when combined with electronics on a microchip, the release rate can be programmed in advance so that the chip delivers a carefully-measured dose of the medicine precisely when it’s needed. Langer’s team has also developed an implantable chip that can monitor a patient’s blood chemistry and deliver medication accordingly.

The polymer research led to the design of a new kind of biomaterial that can be used as tissue. For example, emerging technologies enable the artificial production of skin, cartilage, liver or other cells. The idea behind tissue engineering is to make a temporary structure for the cells, which can grow around and within the polymer material. When the natural tissue is strong enough, the artificial “scaffold” dissolves.

Artificial skin is already in clinical trials and growing liver or pancreas organs from the patient’s own cells may soon be a reality. Artificial tissues may soon help nerves to regenerate and thus help people who are paralyzed, too.

Dr. Langer’s research laboratory at MIT is the largest biomedical engineering lab in the world. And while the man himself can still be seen in the lab, now he is mainly guiding his more than 100 researchers.

“I mainly spend time thinking and trying to find the best directions for the research. I like also teaching, which I find very rewarding and stimulating, so I teach almost daily. Research is a long term undertaking, but teaching is very immediate – you can see right away that your students are learning.”

Dr. Langer has co-founded several small companies that have commercialized his ideas. The first, Enzytech, specialized in drug delivery and the use of enzyme and protein-based technologies in food additives.

Other notable companies are Mimeon, a company that focused on glycomics, the study of carbohydrates used for drug discovery, and MicroChips, a startup that is commercializing microchip drug delivery technology. Many of Langer’s patented inventions have been licensed and are being used globally.


Laureates

-

Optical fibre amplifier

The principle behind the optical fibre amplifier is quite simple: take some optical fibre that incorporates optical material with special properties and, using a laser, target light on it. Optical amplifiers can also be considered to be lasers without the feedback. While a laser’s purpose is to generate coherent light, the optical amplifier boosts the actual quantity of light transmitted.

Physically, an optical amplifier is just a laser source (i.e. a laser diode or an array of laser diodes) and a length of specially-doped optical fibre, reeled into a coil with the optical isolators and filters required to shepherd the light. For scientists the challenge was threefold: finding the right doping material, incorporating it into the fibre and constructing a suitable pump laser.

The basic principle behind optical amplification has been known since the era of Albert Einstein. When certain dopant ions in a material are targeted using an intense laser source, their energy state jumps from lower (ground) to higher (excited). The ions spontaneously drop back to the ground state by emitting the extra energy as a photon (or light quantum) that corresponds to the energy difference between the levels.

When the correct energy levels are used, photons emitted by the dopant ions have the same wavelength as the signal light that needs to be amplified. If this signal is input to the medium then the excited ions are forced to release their energy by stimulated emission. The resulting output signal is therefore more powerful than the input signal. Amplification thus results from the stimulated emission of photons from dopant ions in the doped fibre. The dopant ions are maintained in the excited state by a pump laser, which creates an energy reservoir for the amplification process.

Erbium (Er) is a chemical element with an atomic number of 68, making it one of the heaviest elements in the periodic table before the line of radioactive metals. Discovered by Carl Gustaf Mosander in 1843 in Ytterby, Sweden, the salts of this rare earth metal are rose-colored (which is used in artistic glassware). Apart from optical telecommunications, the 1.55 micron laser wavelength of erbium ions has the “eye-safe” property, which allows many non-hazardous applications such as telemetry, laser imaging, and surgery for skin, eye and ear.

When the core of a silica fibre is doped with trivalent erbium ions (Er³⁺) and is efficiently pumped with a laser at either 980 nm or 1480 nm, it exhibits gain in the 1550 nm region. Erbium was perfect for silica-based optical fibre communications, because standard single-mode optical fibres have minimal loss at wavelengths of 1525–1565 nm. Erbium also works very well at 1570–1610 nm, another widely-used transmission window.
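The relation between the pump and signal wavelengths can be made concrete with the photon energy formula E = hc/λ. The following is a minimal sketch, not a model of a real amplifier: the function names are invented for illustration, and the conversion limit assumes that each pump photon yields at most one signal photon, which is an idealisation.

```python
# Physical constants (exact SI values).
PLANCK_J_S = 6.62607015e-34        # Planck constant, J*s
LIGHT_SPEED_M_S = 299_792_458.0    # speed of light in vacuum, m/s
ELECTRON_VOLT_J = 1.602176634e-19  # one electronvolt in joules


def photon_energy_ev(wavelength_nm: float) -> float:
    """Return the energy of one photon in electronvolts, from E = h*c/lambda."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_J_S * LIGHT_SPEED_M_S / wavelength_m / ELECTRON_VOLT_J


def max_power_conversion(pump_nm: float, signal_nm: float) -> float:
    """Upper bound on pump-to-signal power conversion.

    Assumes (hypothetically) one signal photon per pump photon, so the
    bound is the ratio of photon energies, i.e. pump_nm / signal_nm.
    """
    return pump_nm / signal_nm


if __name__ == "__main__":
    for pump in (980.0, 1480.0):
        print(f"pump {pump:.0f} nm: {photon_energy_ev(pump):.3f} eV per photon, "
              f"conversion limit {max_power_conversion(pump, 1550.0):.1%}")
    print(f"signal 1550 nm: {photon_energy_ev(1550.0):.3f} eV per photon")
```

Both pump wavelengths carry more energy per photon than the 1550 nm signal, which is why either can lift the erbium ions to a level from which they emit at the signal wavelength.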

The most recent version of the optical amplifier is the Raman amplifier, in which the coil of erbium-doped fibre can be much shorter than in a traditional Erbium amplifier. At 500 mW to more than 1 W of optical power, the pumping power required for Raman amplification is higher than that required by the traditional Erbium amplifiers. The principal advantage of Raman amplification is its ability to provide distributed amplification within the transmission fibre, thereby increasing the spans between amplifier and regeneration sites.

-

Viterbi algorithm

(United States)

Viterbi’s innovation is the Viterbi algorithm, the mathematical formula that enables clear and practically error-free radio communication over long distances, from moving low-power transmitters and receivers. The algorithm was published in 1967. Teaching signal processing was difficult due to the complicated nature of the algorithms used, so Viterbi formulated a simpler way to explain the processing techniques. After realizing the importance of this algorithm, he submitted an article to the IEEE Transactions on Information Theory: “Error bounds for convolutional codes and an asymptotically optimum decoding algorithm”.

The paper was published in 1967 but the algorithm was considered not much more than elegant theoretical work until computing technology became powerful enough to handle the massive calculations needed to apply the work. Thus the Viterbi algorithm didn’t find widespread application until the move to digital and wireless communications. At that time nobody could imagine a general application for the algorithm, so Viterbi followed his lawyer’s advice and did not patent it.

The algorithm is essentially just a fast way of eliminating dead ends in the communication. The principle is simple, but the algorithm itself requires considerable computing power. Each bit in the digital information – 0 or 1 – has to be represented by four, eight or more code symbols. So, additional “redundant” information is added at the transmitter, in a process called error correction coding. The result coming into a receiver is a pulsing, miscellaneous stream of bits, ones and zeros.

The received signal is not a clear chain of zeros and ones but a sequence of code symbols from which the actual information bits can be reconstructed. Some individual bits can be dropped or distorted, because with the code symbols the missing bits can be guessed with high confidence. There are four states of ‘guess’: very sure, moderately sure, sort of sure and barely sure. The decoder in the receiver evaluates the certainty by comparing the result with neighboring bits and makes the best possible guess. The result is a clear, practically undamaged message. The key is in a time series of incoming information, with each set of bits tagged in order of arrival.
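The coding and decoding steps described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the decoder used in any real system: it uses a small textbook code with two code symbols per bit (generators 7 and 5 in octal) rather than the four or eight mentioned above, and it makes hard 0/1 decisions instead of grading each guess by confidence. All names are invented for the example.

```python
G = (0b111, 0b101)       # generator polynomials, an assumed textbook choice
K = 3                    # constraint length: each output depends on 3 input bits
N_STATES = 1 << (K - 1)  # the state is the previous K-1 input bits


def _step(state, bit):
    """Return (next_state, output_symbols) for one input bit."""
    reg = (bit << (K - 1)) | state  # newest bit sits in the top position
    out = tuple(bin(reg & g).count("1") % 2 for g in G)
    return reg >> 1, out


def encode(bits):
    """Add redundancy: emit len(G) code symbols per bit, plus K-1 tail zeros."""
    state, symbols = 0, []
    for b in list(bits) + [0] * (K - 1):
        state, out = _step(state, b)
        symbols.extend(out)
    return symbols


def decode(symbols):
    """Hard-decision Viterbi decoding: keep only the best path into each state."""
    inf = float("inf")
    metrics = [0] + [inf] * (N_STATES - 1)  # the encoder starts in state 0
    paths = [[] for _ in range(N_STATES)]
    n = len(G)
    for i in range(0, len(symbols), n):
        received = symbols[i:i + n]
        new_metrics = [inf] * N_STATES
        new_paths = [None] * N_STATES
        for state in range(N_STATES):
            if metrics[state] == inf:
                continue  # state not reachable yet
            for bit in (0, 1):
                nxt, out = _step(state, bit)
                # Branch metric: how many received symbols disagree
                dist = sum(r != o for r, o in zip(received, out))
                cand = metrics[state] + dist
                if cand < new_metrics[nxt]:  # discard the worse path (dead end)
                    new_metrics[nxt] = cand
                    new_paths[nxt] = paths[state] + [bit]
        metrics, paths = new_metrics, new_paths
    # The tail zeros force the encoder back to state 0, so read that survivor.
    return paths[0][:-(K - 1)]


if __name__ == "__main__":
    message = [1, 0, 1, 1, 0, 0, 1, 0]
    received = encode(message)
    received[3] ^= 1   # simulate two symbols distorted in transmission
    received[12] ^= 1
    print(decode(received) == message)
```

At every time step the decoder keeps one surviving path per state and throws the rest away, which is what keeps the search from growing exponentially with message length.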

The algorithm makes it possible to spread a carrier frequency over a wide area of the electromagnetic spectrum. Thousands of low-power transmitters can operate in the same band at the same time in small areas without interfering with each other, because their carrier frequencies are coded with different patterns. This principle was first used in military communications and is now the basis of code division multiple access (CDMA) and UMTS digital cellular communications.
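How differently coded transmitters can share one band is easiest to see in a toy example. The sketch below illustrates only the code-separation idea, under idealised assumptions: perfectly synchronised transmitters, no noise, and four short orthogonal codes chosen for illustration. It does not include the error correction coding or the Viterbi decoding that a real receiver would add, and the function names are invented.

```python
# Orthogonal spreading codes (rows of a 4x4 Walsh-Hadamard matrix); chips are +1/-1.
WALSH_CODES = [
    [+1, +1, +1, +1],
    [+1, -1, +1, -1],
    [+1, +1, -1, -1],
    [+1, -1, -1, +1],
]


def spread(bits, code):
    """Map each bit to +1/-1 and multiply it by every chip of the user's code."""
    chips = []
    for bit in bits:
        level = 1 if bit else -1
        chips.extend(level * c for c in code)
    return chips


def combine(*signals):
    """Model the shared band as the chip-by-chip sum of all transmissions."""
    return [sum(chips) for chips in zip(*signals)]


def despread(channel, code):
    """Correlate the channel with one code; the sign of each sum is that user's bit."""
    n = len(code)
    bits = []
    for i in range(0, len(channel), n):
        corr = sum(x * c for x, c in zip(channel[i:i + n], code))
        bits.append(1 if corr > 0 else 0)
    return bits


if __name__ == "__main__":
    user_a, user_b = [1, 0, 1], [0, 0, 1]
    channel = combine(spread(user_a, WALSH_CODES[1]), spread(user_b, WALSH_CODES[2]))
    print(despread(channel, WALSH_CODES[1]), despread(channel, WALSH_CODES[2]))
```

Because the codes are orthogonal, correlating with one user's code cancels the other user's contribution entirely in this idealised setting.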

At the time that Dr. Viterbi published his algorithm, computers were not powerful enough to make all calculations required for decoding in real time, but with the growth in computing power, the Viterbi algorithm revolutionized the telecommunications environment by providing a useful tool for error-free communications.

-

DNA fingerprints

(Great Britain)

The birth of DNA fingerprinting can be pinpointed exactly to the morning of September 10th, 1984. It was then that Jeffreys had a “eureka” moment in his Leicester lab while examining an X-ray that formed part of a DNA experiment regarding genetic markers for inheritance patterns of illness. But what the experiment showed, unexpectedly, were the similarities and differences in his technician’s family’s DNA. Jeffreys quickly realized the import of this discovery of a biological identification mechanism. “That moment changed my life,” he says. And it led to the development of techniques that would fundamentally change this important area of science.

The first step is to extract DNA from the cells in a sample of blood, saliva, semen or other appropriate fluid or tissue. The traditional way to fingerprint DNA is by doing what is called a Southern blot. The DNA being analyzed must be separated from other material and cut into pieces of different sizes using restriction enzymes, which are proteins that can cut double-stranded DNA without damaging the bases. The pieces are then separated by size using gel electrophoresis. Different length minisatellite alleles give differently-sized DNA fragments and can therefore be sized and compared by measuring the lengths of these fragments.

After this the DNA fragments are transferred from the fragile gel to a strong sheet of nylon or nitrocellulose paper membrane. The gel is discarded and the DNA is ready to be analyzed using a radioactive probe in a hybridization reaction by DNA polymerase.

A piece of X-ray film is exposed to the membrane after radioactive probing and fragments that have bound to the probe appear as black bands when the X-ray film is developed.

By measuring how far the fragments have moved through the gel one can calculate their sizes and therefore obtain the lengths of the different alleles. If one is checking family relationships, for example, one can see if a child has alleles the same size as one of those of either parent.
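The sizing step can be sketched as a small calculation. The example assumes that marker fragments of known size were run on the same gel and that the logarithm of fragment size falls roughly linearly with migration distance between neighbouring markers, a common working approximation. The function names, the marker values used in the usage example and the 5% matching tolerance are all hypothetical.

```python
import math


def estimate_size_bp(distance_mm, ladder):
    """Estimate fragment length in base pairs from its migration distance.

    ladder: list of (distance_mm, size_bp) pairs for markers of known size.
    Interpolates log10(size) linearly between the two neighbouring markers.
    """
    points = sorted(ladder)
    for (d0, s0), (d1, s1) in zip(points, points[1:]):
        if d0 <= distance_mm <= d1:
            frac = (distance_mm - d0) / (d1 - d0)
            log_size = math.log10(s0) + frac * (math.log10(s1) - math.log10(s0))
            return 10 ** log_size
    raise ValueError("distance lies outside the range covered by the ladder")


def shares_allele(child_sizes, parent_sizes, tolerance=0.05):
    """True if any child band matches a parent band within a relative tolerance."""
    return any(abs(c - p) <= tolerance * p
               for c in child_sizes for p in parent_sizes)


if __name__ == "__main__":
    # Illustrative marker positions only, not measured data.
    ladder = [(10.0, 10000), (20.0, 5000), (30.0, 2500), (40.0, 1250)]
    child = [estimate_size_bp(d, ladder) for d in (25.0, 38.0)]
    parent = [estimate_size_bp(d, ladder) for d in (25.2, 14.0)]
    print(shares_allele(child, parent))
```

Smaller fragments travel further through the gel, so a larger distance maps to a shorter allele.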

The original method of DNA fingerprinting was slow and required large quantities of quality DNA, while the new methods use smaller amounts of DNA and samples that may also be more degraded than those previously used.

DNA fingerprinting took a huge leap forward with the invention of the polymerase chain reaction. Both greater discriminating power and the ability to recover information from very small initial samples were now possible through the amplification of specific regions of DNA, using temperature cycling and a thermostable polymerase enzyme along with fluorescently-labelled, sequence-specific DNA primers.
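Why a very small sample is enough follows from the arithmetic of amplification: in the ideal case, each temperature cycle doubles the number of copies of the targeted region. The sketch below is a simplified model with an invented function name; the `efficiency` parameter is an assumption added to show that real reactions fall short of perfect doubling, and the plateau that real reactions eventually reach is ignored.

```python
def copies_after_pcr(initial_copies: int, cycles: int, efficiency: float = 1.0) -> float:
    """Expected copy number of the target region after thermal cycling.

    efficiency is the fraction of templates copied in each cycle
    (1.0 means perfect doubling).
    """
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must lie between 0 and 1")
    return initial_copies * (1.0 + efficiency) ** cycles


if __name__ == "__main__":
    for cycles in (10, 20, 30):
        print(cycles, copies_after_pcr(10, cycles), copies_after_pcr(10, cycles, 0.9))
```

Under perfect doubling, thirty cycles turn each starting copy into roughly a billion.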

Recent innovations have included the creation of primers targeting polymorphic regions on the Y-chromosome, which allows the resolution of mixed profiles from multiple males and helps in cases where a differential extraction is not possible. Y-chromosomes are paternally inherited, so analysis can help in the identification of paternally-related males.